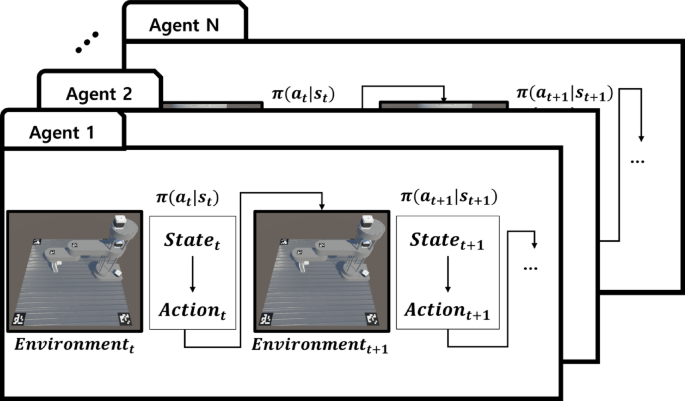

This study proposes an adaptive control system capable of quickly and accurately diagnosing and responding to various fault scenarios in robotic systems. To achieve this, a digital twin system that integrates physical data into a virtual environment was developed. The system combines a fault diagnosis model with a reinforcement learning (RL)-based control model, enabling real-time fault responses. This section provides an overview of the learning and application processes of the proposed system and introduces the implementation of each module.

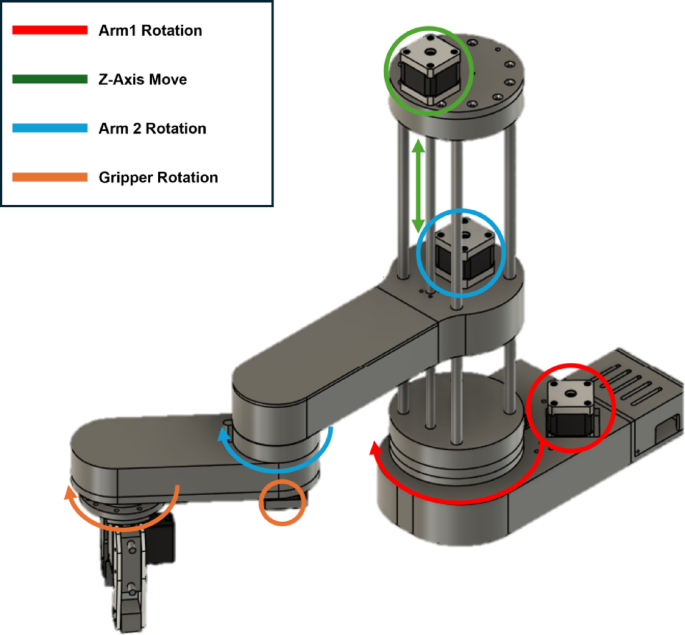

Figure 1 shows the structure of the robot used in this study. The positions of each motor and drive axis are indicated, and the fault diagnosis and control commands are designed based on this structure. Physical properties, such as the robot’s weight and the direction of the drive axes, are accurately reflected within the virtual environment.

Robot structure overview. Diagram illustrating the robotic structure used in this paper, with key components such as motors and drive axes labeled for fault diagnosis and control28.

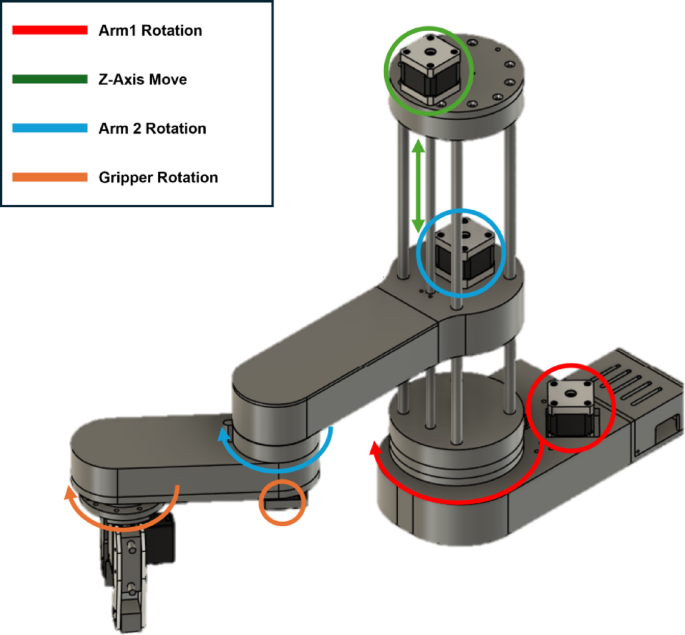

Figure 2 illustrates the overall architecture of the proposed system. It incorporates a self-learning control process in which the model autonomously improves its response to faults by continuously adjusting its control policies to optimize performance across diverse conditions. The system operates in two modes.

The first is the training mode, which focuses on learning in a virtual environment. As shown in Fig. 2a, this mode involves collecting fault data from motor components at the part level, selecting and training state-diagnosis models using a unified SDM mapping file, and constructing a virtual environment that reflects the physical properties of the system. Scene randomization techniques are applied in this environment to simulate a variety of fault scenarios, enabling the development of adaptive control models. Similar simulation-driven optimization approaches have also been demonstrated in computational engineering domains, validating the feasibility of digital-twin-based adaptive control frameworks29.

Overall framework of proposed system. In training mode, a digital twin is constructed using the SDM framework to train the control module. In deployment mode, the trained module is applied to the actual robot.

The second is the deployment mode, in which the trained models are implemented in a real robotic system. Figure 2b shows the deployment mode. In this phase, real-time sensor data enables state diagnosis, and the diagnosis results, such as fault states, are used as inputs to the adaptive control module to perform self-learning control by adjusting the optimal control policies. To address potential coordinate mismatches between the virtual and real environments, calibration is achieved through marker-based homography matrix transformations, ensuring precise control command execution. These software-defined self-learning control processes ensure that the system can maintain reliable and efficient operations, even under a variety of fault conditions.

Reinforcement learning-based self-adaptive control module

RL is widely used across various industries for tasks such as safe path exploration and optimization in complex environments30,31. Previous studies have utilized RL to solve problems such as robot path planning and autonomous driving, where RL algorithms learn optimal policies to achieve specific goals within an environment32,33. In contrast to traditional applications, the proposed method focuses on real-time adaptive control under fault conditions. Specifically, it aims to develop control policies that allow the system to adapt quickly to faults and apply these policies to real robot operations.

The RL-based adaptive control module is closely integrated with the fault diagnosis model. During the training phase, various fault scenarios are applied to the virtual environment, enabling the RL agent to learn how to respond to different fault conditions. For example, when the system detects an overcurrent, the agent switches to a low-power mode; in the case of a worn belt, it applies additional torque control commands to maintain stability. Fault parameters are provided to the virtual environment, allowing the agent to learn suitable actions for each scenario. This process equips the system with control policies capable of responding quickly to unexpected faults.
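The way fault parameters might be supplied to the virtual environment at the start of each episode can be sketched as follows. This is only an illustration under stated assumptions: the fault labels beyond the two named above, the `FaultScenario` fields, the severity scale, and the motor count of four are hypothetical and are not taken from the implementation described in this paper.

```python
import random
from dataclasses import dataclass

# Hypothetical label set; overcurrent and worn belt are the examples named in the text.
FAULT_TYPES = ("normal", "overcurrent", "worn_belt")


@dataclass
class FaultScenario:
    motor_id: int     # index of the affected motor (assumed numbering)
    fault_type: str   # one of FAULT_TYPES
    severity: float   # assumed scale: 0.0 = no effect, 1.0 = worst case


def sample_fault_scenario(num_motors: int, rng: random.Random) -> FaultScenario:
    """Draw one fault scenario to inject into a virtual-environment episode."""
    fault_type = rng.choice(FAULT_TYPES)
    # A healthy episode carries zero severity; faulty ones get a random level.
    severity = 0.0 if fault_type == "normal" else rng.uniform(0.1, 1.0)
    return FaultScenario(rng.randrange(num_motors), fault_type, severity)


rng = random.Random(0)  # fixed seed so the sampled scenarios are reproducible
scenarios = [sample_fault_scenario(num_motors=4, rng=rng) for _ in range(5)]
```

Sampling a new scenario per episode is one simple way to expose the agent to a mix of healthy and faulty conditions during training.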

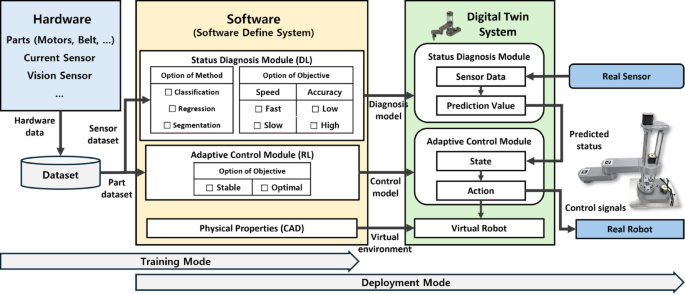

Figure 3 illustrates the learning process of the RL agent in a virtual environment. Each agent operates independently in its virtual environment, interacting through its states and actions at each time step t, leading to the next state and action. State information includes data such as the positions (x, y, z) of the target object and end-effector. Based on this information, the agent determines the torque values for each motor, thereby developing the capability to generate appropriate control commands under various fault conditions.

RL mechanism of proposed system. Each agent interacts with its own environment through sequential state-action transitions based on the Markov Decision Process. The next state is determined by the agent’s action and environment dynamics. All agents share a common policy network and are trained in parallel to improve learning efficiency and generalization performance.

The robot learns to adjust the current values and movement parameters of each actuator to ensure stable movement toward the target position, even in a malfunctioning state. Each episode begins with the target object placed in a random position, requiring the robot to execute the appropriate actions to reach the goal. After performing the movement, the system evaluates the performance based on distance. If the robot reaches the target position, the attempt is classified as successful; if it fails to stabilize, the attempt is classified as a failure. At the end of each episode, a reward is assigned based on success or failure, guiding the model toward acquiring a robust control strategy.

The state variables used in the training environment consist of information that can be obtained in real-world operation. The status of each actuator, acquired through the state diagnosis model, and the position of the target object, acquired through a camera, serve as inputs to the RL agent. The state space also includes the position of the end-effector and the distance between the end-effector and the target object. These variables enable the robot to perceive its environment and execute appropriate actions.

The robot’s action space was designed to incorporate the factors essential for maintaining stable operation, even under fault conditions. The agent can regulate both the degree of movement and the current levels to ensure controlled execution. By combining these actions, the robot learns robust actuation strategies that reliably achieve its objectives, even in fault scenarios.

One of the key factors influencing RL performance is the reward function. In this study, both immediate and delayed rewards were applied. The immediate reward encourages the robot to move closer to its target: at each step, the distance between the target object and the end-effector was measured, and a higher reward was assigned as the distance decreased. This guided the robot to navigate efficiently toward the target position. After an episode concluded, a delayed reward was provided. If the robot successfully reached the target point, it was assigned a high reward; otherwise, it received a lower reward. This structure ensures that, as training progresses, the model is optimized to maintain stable actions.

A multi-agent learning approach was applied, allowing multiple agents to learn independently and in parallel. Each agent trains within its own environment, but all agents share a common set of weights to accelerate learning and improve generalization performance. This parallel-learning framework enables the simultaneous exploration of diverse states, thereby facilitating the efficient acquisition of policies that yield higher rewards.

Once the control policies are trained, they are deployed during the operational phase of the system. The RL module receives the real-time fault diagnosis results as input and executes control commands designed for the detected fault condition. When a fault is detected, the RL module adjusts the motor outputs to minimize the position error between the end-effector and the target point. Through this process, the policies learned in the virtual environment are applied to the real system, enabling fast adaptation and stable operation of the robot.

Figure 3 illustrates the multi-agent interaction and parallel data collection structure, and Algorithm 1 details the inner learning loop executed by each agent. As shown in Algorithm 1, the PPO-clip algorithm (proximal policy optimization with a clipped objective) was used to stably update the policy and value networks. PPO-clip follows a structured procedure that optimizes policy updates while maintaining stability. The process begins by collecting a batch of trajectories from interactions with the environment under the current policy. The reward-to-go is then computed as the discounted sum of future rewards, which serves as the basis for updating the policy. To improve sample efficiency, generalized advantage estimation (GAE) is applied to estimate the advantage function, helping the agent better evaluate actions relative to the current policy. The policy update step uses the PPO-clip objective, which introduces a clipping mechanism to restrict drastic updates and prevent performance collapse. This ensures a more controlled and stable learning process by balancing exploration and exploitation. Additionally, the value function is updated using the mean squared error (MSE) loss, refining the state-value predictions to enhance decision-making. Together, these techniques make the PPO-clip approach efficient and stable, and therefore well suited to adaptive control applications.
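The state representation and the two-part reward described above can be sketched as follows. This is a minimal illustration, not the reward used in the experiments: the distance-difference form of the immediate reward, the success radius, the reward magnitudes, and all function names are assumptions made for the example.

```python
import math


def build_state(target_pos, effector_pos, actuator_status):
    """Concatenate target position, end-effector position, their distance,
    and one diagnosis flag per actuator into a flat state vector."""
    dist = math.dist(target_pos, effector_pos)
    return [*target_pos, *effector_pos, dist, *actuator_status]


def step_reward(prev_dist, curr_dist, scale=1.0):
    """Immediate reward: positive when the end-effector moved closer
    to the target during this step (assumed shaping form)."""
    return scale * (prev_dist - curr_dist)


def terminal_reward(final_dist, success_radius=0.05,
                    success_bonus=10.0, failure_reward=-1.0):
    """Delayed reward at episode end: high on success, lower otherwise.
    The threshold and magnitudes are placeholder values."""
    return success_bonus if final_dist <= success_radius else failure_reward


# Example: three actuators, the second one flagged as faulty by the diagnosis model.
state = build_state((0.3, 0.2, 0.1), (0.0, 0.0, 0.0), actuator_status=[0, 1, 0])
```

Using the change in distance rather than the raw distance is one common shaping choice; either form satisfies the requirement that the reward grows as the end-effector approaches the target.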

The RL-based adaptive control module proposed in this study helps the robotic system operate reliably, even when unexpected faults occur. This supports higher productivity and lower maintenance costs.

PPO-clip for adaptive control
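The quantities used in a PPO-clip update (reward-to-go, GAE advantages, the clipped surrogate objective, and the MSE value loss) can be written out in a few lines. The sketch below follows the standard textbook definitions and is not a reproduction of Algorithm 1; the default values for `gamma`, `lam`, and `eps` are common choices assumed for illustration, and a practical implementation would compute these on tensors and backpropagate through the networks.

```python
def rewards_to_go(rewards, gamma=0.99):
    """Discounted sum of future rewards for each time step."""
    out, running = [0.0] * len(rewards), 0.0
    for t in reversed(range(len(rewards))):
        running = rewards[t] + gamma * running
        out[t] = running
    return out


def gae_advantages(rewards, values, last_value=0.0, gamma=0.99, lam=0.95):
    """Generalized advantage estimation over one trajectory."""
    advantages, gae = [0.0] * len(rewards), 0.0
    for t in reversed(range(len(rewards))):
        next_value = values[t + 1] if t + 1 < len(values) else last_value
        delta = rewards[t] + gamma * next_value - values[t]  # TD residual
        gae = delta + gamma * lam * gae
        advantages[t] = gae
    return advantages


def ppo_clip_objective(ratios, advantages, eps=0.2):
    """Mean clipped surrogate; ratios are pi_new(a|s) / pi_old(a|s)."""
    terms = []
    for r, a in zip(ratios, advantages):
        clipped = min(max(r, 1.0 - eps), 1.0 + eps)
        terms.append(min(r * a, clipped * a))
    return sum(terms) / len(terms)


def value_loss(predicted, targets):
    """Mean squared error between value predictions and reward-to-go targets."""
    return sum((p - t) ** 2 for p, t in zip(predicted, targets)) / len(predicted)
```

The policy is updated by maximizing `ppo_clip_objective` and the value network by minimizing `value_loss`; the clipping bounds how far a single update can move the policy away from the one that collected the data.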

Deep learning–based state diagnosis model

Data collection and preprocessing methods

An ACS712 current sensor with a sampling frequency of 10 kHz was used to measure the current flowing through the motor under overcurrent fault conditions. During data collection, random motor commands were applied to capture diverse current variations to ensure the acquisition of both normal- and abnormal-state data. Because the raw data may contain noise and bias due to sensor characteristics and environmental factors, appropriate preprocessing steps were implemented to enhance the reliability of the analysis. First, noise filtering was applied to remove transient spikes, which could lead to the misinterpretation of current fluctuations. This step prevented false detections and ensured stable and accurate current signals. In addition, the offset correction was applied to compensate for the bias voltage of the sensor, thereby improving the accuracy of the measured current values. These preprocessing techniques minimize sensor measurement deviations and enhance data reliability for further analysis. The pre-processed voltage and current data were normalized to maintain a consistent data scale. To address the imbalance between normal and abnormal data, an oversampling technique was applied to prevent issues related to the overfitting or underrepresentation of certain classes during model training. A total of 100,000 time-series data points were collected, and a window size of 10 was set, resulting in 10,000 windows. Among these, 5000 windows were assigned to normal data and 5000 to abnormal data, ensuring a balanced representation for model training and evaluation.
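The preprocessing chain described above (spike filtering, offset correction, normalization, windowing, and oversampling) might look like the following sketch. The specific choices are assumptions made for illustration: a median filter for spike removal, min-max scaling for normalization, non-overlapping windows, random duplication for oversampling, and a 2.5 V sensor offset in the toy data. The paper does not specify which variants were used.

```python
import random
import statistics


def median_filter(signal, kernel=3):
    """Suppress transient spikes with a sliding median (assumed filter type)."""
    half = kernel // 2
    return [statistics.median(signal[max(0, i - half): i + half + 1])
            for i in range(len(signal))]


def remove_offset(signal, offset):
    """Subtract the sensor bias so that zero current reads as zero."""
    return [x - offset for x in signal]


def min_max_normalize(signal):
    """Scale values into [0, 1]; guards against a constant signal."""
    lo, hi = min(signal), max(signal)
    span = (hi - lo) or 1.0
    return [(x - lo) / span for x in signal]


def make_windows(signal, size=10):
    """Split the series into non-overlapping windows of `size` samples."""
    return [signal[i:i + size] for i in range(0, len(signal) - size + 1, size)]


def oversample(windows, labels, rng):
    """Duplicate random minority-class windows until all classes are equal."""
    by_class = {}
    for w, y in zip(windows, labels):
        by_class.setdefault(y, []).append(w)
    target = max(len(items) for items in by_class.values())
    out = []
    for y, items in by_class.items():
        extra = [rng.choice(items) for _ in range(target - len(items))]
        out.extend((w, y) for w in items + extra)
    rng.shuffle(out)
    return out


# Toy signal with one injected spike; real data would come from the sensor.
raw = [2.5 + 0.01 * (i % 7) for i in range(100)]
raw[40] = 9.0
clean = min_max_normalize(remove_offset(median_filter(raw), offset=2.5))
windows = make_windows(clean, size=10)
balanced = oversample(windows, [0] * 8 + [1] * 2, random.Random(0))
```

With a window size of 10 and no overlap, 100,000 samples yield 10,000 windows, which matches the counts reported above.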

Learning process of state diagnosis model

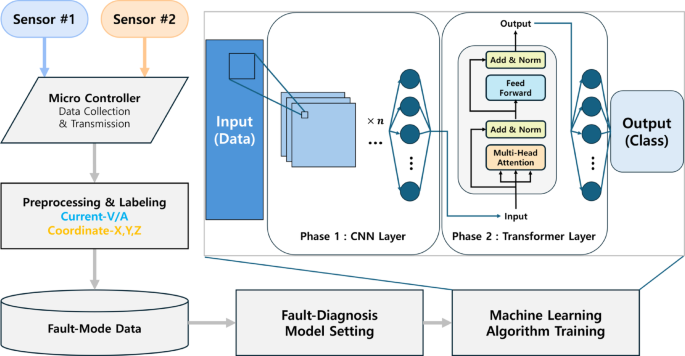

Equipment diagnosis models play an essential role in ensuring the stable operation and performance maintenance of robotic systems. Detecting and responding quickly to unexpected incidents is critical for enhancing system productivity34,35. CNN-based equipment condition diagnostic models are widely used in various industrial systems. For instance, Gao et al. proposed a model that analyzed vibration data from lift systems to detect faults36. In another study, Huang et al. combined a CNN and long short-term memory (LSTM) model with a sliding window processing technique to address the issue of time delay in fault diagnosis37. However, the sequential nature of the LSTM limits its training speed, making it less effective in promptly responding to various fault conditions.

Zhenshan et al. proposed a method that transforms one-dimensional fault signals into two-dimensional images using a short-time Fourier transform and diagnoses faults using a Transformer model38. This method achieved a high fault-classification accuracy of 98.45%. Thomas et al. proposed a model that combined a CNN and Transformer for fault detection and location in power lines, automatically identifying the type, phase, and location of faults39. Li et al. introduced a hybrid structure combining a CNN and Transformer encoder, where the CNN extracts local features from images and the Transformer models these features globally to derive higher-dimensional semantics40.

A CNN is advantageous for extracting spatial features from data, whereas a Transformer is effective for learning long-term dependencies in sequential data. In the CNN-Transformer model, the spatial features are first extracted through the CNN layers. Then, the Transformer layers learn the temporal dependencies to diagnose the equipment conditions. This approach leverages the strengths of both the CNN and Transformer models. This study proposes a structure that allows for the selection and training of various models tailored for specific purposes.

Figure 4 illustrates the data flow and training structure of the proposed equipment condition diagnosis model. Current, voltage, and coordinate data collected from the sensors were transmitted in real time via a microcontroller and then pre-processed and labeled for training.

Hybrid fault diagnosis model.

During the training phase of the model, parameters reflecting the equipment conditions in a virtual environment were provided to enable the RL–based control module to learn various scenarios. In the operational phase of the system, the equipment condition is detected in real-time, and the detected information is supplied as input to the RL model to facilitate adaptive control. Through this approach, the proposed system plays a critical role in responding to diverse situations and supporting the stable operation of the equipment.

Unified software mapping system

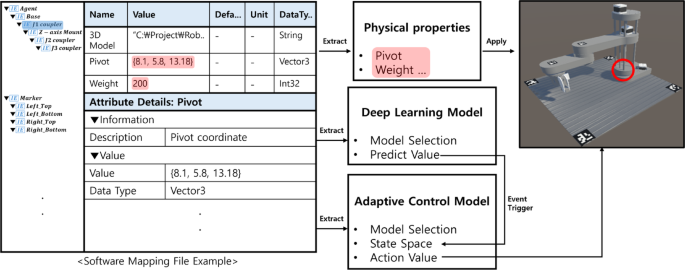

This paper proposes a methodology that uses AML files as a unified software mapping system to accurately reflect the physical properties and states of robotic systems in a virtual environment. It also enables modifications to the required models and software. Building a precise virtual environment is essential for predicting robot behavior and training control policies. However, previous studies have struggled to maintain data consistency and to avoid manual input errors, making it difficult to synchronize virtual and real environments. To address these issues, this study constructed a virtual environment by automatically parsing AML files that describe the physical properties and states of the robot, and proposed a flexible system capable of generating adaptive control modules. Utilizing a unified SDM mapping file, the system enables seamless adaptation to diverse conditions, thereby enhancing the flexibility and scalability of the learning framework. In addition, a digital twin serves as a comprehensive representation of real-world information by integrating all relevant data to create a highly accurate and adaptable simulation environment. This helps the RL model generalize across different manufacturing scenarios.

The proposed integrated AML file contains the structural attributes of the robot, such as the 3D model information, scale, pivot coordinates, and part weights, offering flexibility to accommodate design changes. Figure 5 demonstrates the implementation of AML files in a virtual environment using the Unity engine. The information parsed from the AML files specifies precise component placements, such as pivot coordinates (8.1, 5.8, 13.18), ensuring that each component is arranged according to its designated position and structure.

Physical properties reflection and software mapping process.
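As an illustration of the automated parsing step, the sketch below reads component attributes from a small XML fragment written in the general style of AML. The element layout, the attribute names (`pivot`, `scale`, `weight_kg`), the component name, and the weight value are hypothetical placeholders; only the pivot coordinates are taken from the text. The actual AML file used in this study is not shown in the paper, and real AML files typically declare XML namespaces that would need to be handled.

```python
import xml.etree.ElementTree as ET

# Hypothetical, simplified fragment; not the file used in this study.
AML_SNIPPET = """
<CAEXFile>
  <InstanceHierarchy Name="Robot">
    <InternalElement Name="Link1">
      <Attribute Name="pivot"><Value>8.1,5.8,13.18</Value></Attribute>
      <Attribute Name="scale"><Value>1.0</Value></Attribute>
      <Attribute Name="weight_kg"><Value>0.35</Value></Attribute>
    </InternalElement>
  </InstanceHierarchy>
</CAEXFile>
"""


def parse_components(aml_text):
    """Return {component name: physical properties} parsed from the XML text."""
    root = ET.fromstring(aml_text.strip())
    components = {}
    for element in root.iter("InternalElement"):
        # Collect each attribute's name and text value for this component.
        attrs = {a.get("Name"): a.findtext("Value")
                 for a in element.findall("Attribute")}
        components[element.get("Name")] = {
            "pivot": tuple(float(v) for v in attrs["pivot"].split(",")),
            "scale": float(attrs["scale"]),
            "weight_kg": float(attrs["weight_kg"]),
        }
    return components


components = parse_components(AML_SNIPPET)
```

A dictionary of this kind could then be handed to the simulation engine to place and configure each part, so that a design change only requires editing the file.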

By utilizing an automated parsing process based on AML files, the proposed method prevents data loss, maintains consistency in model construction, and reduces errors caused by manual input, thereby providing a stable environment for control policy training. In addition, the system provides scalability to adapt to design changes and fault scenarios. For example, when new scenarios arise or component replacements are required, the virtual environment can be updated quickly by modifying the AML file. A pipeline was also established to transfer the output of the deep learning model, such as fault labels, to the adaptive control module through event triggers. This architecture allows for self-learning control based on the status of the diagnosed equipment.

The models developed within this structure can be applied to both virtual and real environments, offering a method for simulating and addressing complex issues that may arise in physical systems. This approach minimizes errors and risks in real-environment operations, so that optimized control policies can be effectively implemented in the actual system. It also enables precise integration between physical systems and the virtual environment, allowing for flexible adaptation to various design changes or scenarios through the retraining and modification of the model. This ensures consistency in the learning and execution of control policies, supporting stable and efficient robot operations, even under fault conditions.
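The event-trigger pipeline that passes fault labels from the diagnosis model to the control module can be pictured as a small publish/subscribe mechanism. The sketch below is an assumption about how such a pipeline could be organized; the `EventBus` and `AdaptiveController` classes, the event name, and the payload fields are hypothetical and do not describe the actual software.

```python
from collections import defaultdict


class EventBus:
    """Minimal publish/subscribe dispatcher (illustrative only)."""

    def __init__(self):
        self._handlers = defaultdict(list)

    def subscribe(self, event_name, handler):
        self._handlers[event_name].append(handler)

    def publish(self, event_name, payload):
        # Call every handler registered for this event and collect the results.
        return [handler(payload) for handler in self._handlers[event_name]]


class AdaptiveController:
    """Stand-in for the control module: stores the latest fault label per motor
    so it can be included in the next state passed to the policy."""

    def __init__(self):
        self.fault_state = {}

    def on_fault(self, event):
        self.fault_state[event["motor_id"]] = event["label"]
        return dict(self.fault_state)


bus = EventBus()
controller = AdaptiveController()
bus.subscribe("fault_detected", controller.on_fault)

# The diagnosis model would publish an event like this when it flags a fault.
bus.publish("fault_detected", {"motor_id": 2, "label": "overcurrent"})
```

Decoupling the two modules in this way lets either side be replaced, for example swapping the diagnosis model, without changing the other.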

Calibration and interfaces for real environment

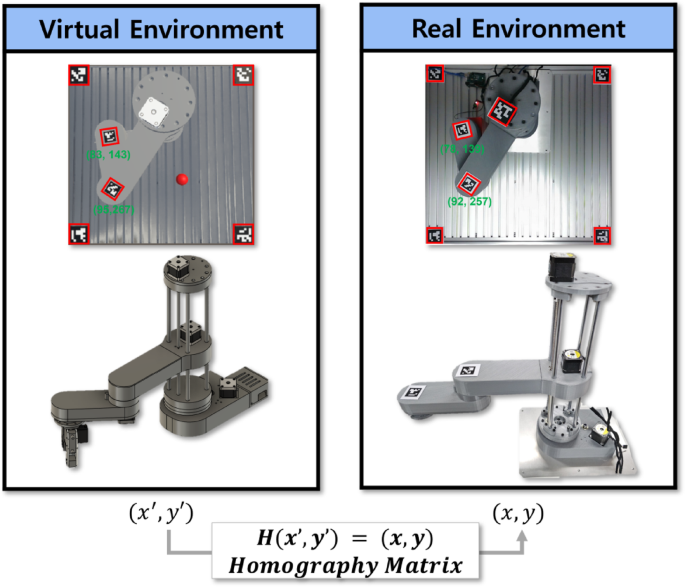

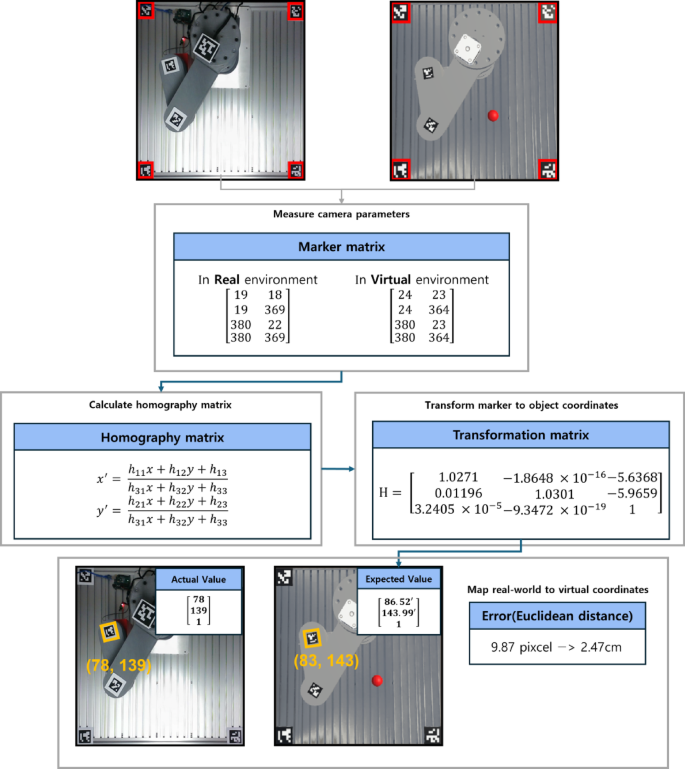

Precise synchronization between virtual and real environments is crucial. This ensures that the control policies learned in the virtual environment can be correctly applied to real robotic systems41. Even small discrepancies in the coordinates between the two environments can reduce control performance. These differences make it more difficult for the system to respond quickly to abnormal responses. To address these issues, this paper proposes a synchronization system that uses 2D markers and homography matrix transformations. This system aligns the coordinates of both environments, ensuring that the control commands from the virtual environment are accurately applied to the real system. Two-dimensional (2D) markers are widely used as visual feedback tools in computer vision. Each marker encodes a unique ID and coordinate information, enabling the precise tracking of an object’s position and orientation42,43,44. In this study, markers were placed on the key robot components and within the surrounding environment. This ensured that both the virtual and real environments were aligned using the same reference points. This setup integrated the coordinate systems of the two environments. Consequently, the control commands learned in the virtual environment were accurately transmitted to the real system. Figure 6 illustrates the calibration process. The markers were positioned at identical locations in both virtual and real environments to maintain coordinate consistency. This ensures that the learned control commands are executed according to precise spatial references, thereby minimizing the potential errors caused by misalignment between the two environments. This marker-based approach allows real-time control commands to be transmitted without delay and ensures stable robotic operation, which is consistent with previous studies emphasizing robust marker-based calibration for aligning virtual and physical environments45.

Marker-based calibration alignment process. The figure illustrates how the coordinate systems of the virtual and real environments are aligned to ensure accurate control execution. Identical 2D markers are placed in both environments to establish shared reference points. A homography matrix is computed to map coordinates from the virtual environment (x′, y′) to the real-world coordinates (x, y). This calibration process enables precise application of control commands learned in simulation to real robotic systems.

Homography matrix transformations refine the alignment between virtual and real environments by performing planar transformations47. This ensures that the coordinates in the virtual environment are accurately mapped to their real-environment counterparts. This process minimizes misalignment and improves the accuracy of the control commands. For example, if a coordinate error occurs while the end-effector moves toward its target, the transformation ensures that stable control is maintained. This synchronization system guarantees the precise transmission of control commands and accurate coordinate alignment between the virtual and real environments. As a result, the robotic system maintains stable operation. The system applies RL–based control policies to a virtual environment, thereby ensuring consistent performance and maximizing reliability. The use of markers and homography-based calibration minimizes control errors, enabling fast and precise fault responses for stable and efficient operations.
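The planar mapping from virtual coordinates (x′, y′) to real coordinates (x, y) can be estimated from four marker correspondences. The sketch below solves the standard eight-equation linear system with the last matrix entry fixed to 1, using only the Python standard library; the marker coordinates are made-up values for illustration, and in practice a library routine such as OpenCV's `cv2.findHomography` would normally be used instead.

```python
def solve_linear(a, b):
    """Solve a x = b by Gauss-Jordan elimination with partial pivoting."""
    n = len(b)
    m = [list(row) + [rhs] for row, rhs in zip(a, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        if abs(m[pivot][col]) < 1e-12:
            raise ValueError("degenerate marker layout")
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(n):
            if r != col:
                f = m[r][col] / m[col][col]
                m[r] = [x - f * y for x, y in zip(m[r], m[col])]
    return [m[i][n] / m[i][i] for i in range(n)]


def estimate_homography(virtual_pts, real_pts):
    """Estimate the 3x3 homography from exactly four point pairs (h33 = 1)."""
    a, b = [], []
    for (xv, yv), (xr, yr) in zip(virtual_pts, real_pts):
        a.append([xv, yv, 1, 0, 0, 0, -xr * xv, -xr * yv])
        b.append(xr)
        a.append([0, 0, 0, xv, yv, 1, -yr * xv, -yr * yv])
        b.append(yr)
    h = solve_linear(a, b) + [1.0]
    return [h[0:3], h[3:6], h[6:9]]


def apply_homography(h, point):
    """Map a virtual-environment point to real-environment coordinates."""
    x, y = point
    w = h[2][0] * x + h[2][1] * y + h[2][2]
    return ((h[0][0] * x + h[0][1] * y + h[0][2]) / w,
            (h[1][0] * x + h[1][1] * y + h[1][2]) / w)


# Hypothetical marker positions: virtual corners and their observed pixel locations.
virtual_markers = [(0, 0), (1, 0), (1, 1), (0, 1)]
real_markers = [(100, 120), (520, 110), (540, 400), (90, 410)]
H = estimate_homography(virtual_markers, real_markers)
```

Once `H` is known, any target coordinate produced in the virtual environment can be passed through `apply_homography` before the command is sent to the physical robot.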

Prototype implementation

The performance of the proposed system was analyzed through a case study focused on fault scenarios. An overcurrent occurs when excessive current flows into the motor, causing an overload. This condition can lead to reduced motor speed or complete motor stoppage. If left unresolved, it can degrade system efficiency and damage the motor or drive system over time. Therefore, early detection and appropriate control measures are essential to prevent such issues.

Current sensors were used to monitor overcurrent conditions as the motor output increased. These sensors measured the motor load status in real time and recorded changes in current levels under varying output conditions. Multiple experiments were conducted under different output conditions to observe changes in motor behavior during overcurrent events, ensuring reliable data collection for fault scenarios. The collected component-level data were used to train the fault diagnosis model, which accurately identified motor faults. Based on this diagnosis, the adaptive control module generates the appropriate control commands in real time.

This section provides a comprehensive evaluation and analysis of the system performance. First, the performance of the fault diagnosis model was evaluated. The accuracy, prediction speed, and model size of the CNN-Transformer hybrid model were compared with those of other models. This comparison demonstrates the advantages of the CNN-Transformer model over the single CNN or Transformer models, particularly in terms of accuracy and reliability in fault detection.

Next, the performance of the adaptive control module was analyzed. The RL algorithms PPO and SAC were compared to assess how they handle robot control commands. Whereas PPO offers better learning stability and efficiency, SAC provides stronger exploration capabilities but suffers from slower learning, making it less effective in real-time control scenarios. This comparison explains why PPO outperformed SAC in the robotic control tasks.

Finally, the applicability and efficiency of the trained modules were evaluated in real fault environments. The fault diagnosis model and adaptive control module were applied to an actual system to assess their ability to detect and respond to faults in real time. This evaluation examines how control commands learned in a virtual environment are transmitted to a physical robot controller, and how the calibration process aligns the virtual and real environments. The focus was on assessing the responsiveness and accuracy of the system in handling real fault scenarios and demonstrating its applicability and stability in real-time operations. The results demonstrate that the proposed digital twin-based fault-adaptive control system outperforms traditional systems in diagnosing and responding to faults in real time. This highlights the ability of the system to deliver superior performance across various industrial environments, ensuring both reliability and efficiency in operations.

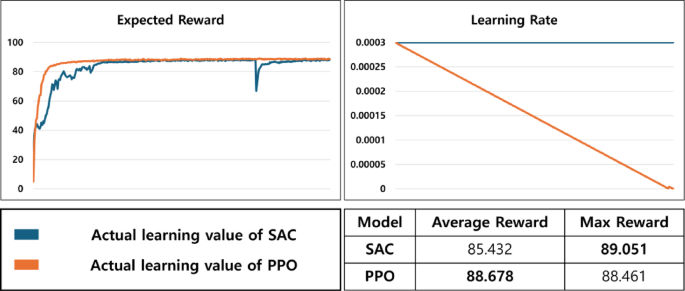

Performance of self-adaptive control module

This study conducted comparative experiments between the PPO and SAC algorithms to evaluate the performance of the RL-based fault-adaptive control module. Both algorithms were trained to learn the control policies under fault conditions, and their adaptability and learning efficiency were analyzed through a performance comparison. As shown in Fig. 7, PPO converges rapidly early in the training process, maintaining stable rewards and achieving higher average rewards46. By contrast, SAC, owing to its exploration-based learning, requires a longer exploration time and exhibits variability in its learning process. This behavior can be attributed to SAC’s exploration of a wide range of policy changes, which can be advantageous in complex environments47. However, when rapid adaptation is required in fault scenarios, PPO provides faster and more stable learning results than SAC.

Comparison of learning curves between PPO and SAC algorithms.

When the control policies trained by PPO and SAC were applied to the virtual environment, both models achieved a 100% success rate in reaching the target. This indicates that the RL-based control modules performed reliably across all episodes without failure. These results demonstrate the stability of the control policies learned in the virtual environment and confirm the consistency and reliability of the real-time adaptive control system.

In the comparative experiments, PPO outperformed SAC by achieving faster convergence and maintaining higher rewards, making it more suitable for learning control policies under fault conditions. As shown in Fig. 7, PPO exhibited a rapid reduction in policy loss during training, after which the policy loss and entropy remained stable. This indicates that PPO quickly completed exploration and established a stable policy, enabling rapid learning of adaptive control for fault scenarios. PPO also demonstrated a gradual decrease in learning rate throughout the training process. This allowed rapid exploration in the early stages and fine-tuning for stable performance in later stages. In contrast, SAC maintained a fixed learning rate, leading to continuous exploration but at a slower pace.

The performance comparison confirmed that PPO offers faster learning and greater stability. Although the extensive exploration of SAC can be advantageous in complex environments, PPO proved to be the better choice for real-time adaptive control in fault scenarios. These findings suggest that PPO is more effective for applications requiring quick and precise control, such as fault-responsive robotic systems. The RL-based fault-adaptive control module developed in this study successfully demonstrated its effectiveness in a virtual environment, allowing for the selection and training of models based on the objectives of optimizing and maintaining stable reward policies.

Performance of fault diagnosis model

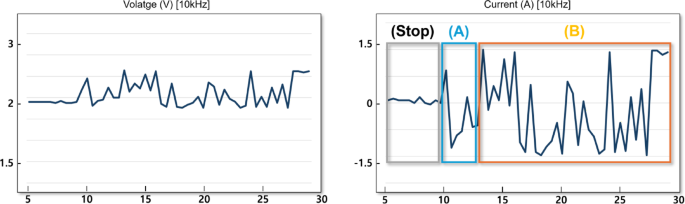

The collected data served as the primary data for training the fault diagnosis model. In this study, current data were collected through a sensor from a motor of the robot. Figure 8 visualizes the current and voltage data collected in normal mode, one of the power modes tested in the experiments. The plot in Fig. 8 is divided into three parts: the stop section, where the robot is waiting; section (A), where it operates normally; and section (B), where a failure occurs. Normal and failure data were obtained by labeling the data accordingly.

Examples of collected sensor data for fault analysis (Left: voltage data, Right: current data).

The sensor data were sampled at 10 Hz, and the window size was set to 10, so that the occurrence of a failure is determined from 1 s of real-time data. The collected data included time-series patterns of current fluctuations and failure situations. The CNN-Transformer hybrid model proposed in this study was configured to handle such time-series data more effectively. The CNN extracts important spatial features from the sensor data, while the Transformer performs well in learning the long-term dependencies within the time series. The integration of the two models resulted in improved accuracy compared to the use of individual models. The experiment was conducted on an NVIDIA 4070 GPU with a 13th Gen Intel(R) Core(TM) i5-13600KF processor, using 10,000 data samples, a batch size of 16, a window size of 10, and 100 epochs. Table 1 presents a comparative analysis of the CNN, Transformer, and CNN-Transformer hybrid models in terms of accuracy, prediction speed, model size, and model complexity. The results indicated that the CNN-Transformer hybrid model achieved the highest accuracy, whereas the CNN model, which had the smallest size, demonstrated the fastest prediction speed.

Based on these findings, this study suggests that fault diagnosis models can be selected according to the specific requirements of the situation. The CNN-Transformer hybrid model is recommended for scenarios requiring high accuracy. Conversely, when rapid prediction speed is essential, the CNN model is the better choice. The model size can also be taken into account depending on the requirements of the application. In the learning stage, fault scenarios are simulated in various virtual environments, and the fault diagnosis results are used as input to an RL-based control model. The RL model adjusts the state of the robot by generating a control command based on the fault diagnosis results.

Evaluation of adjustments between real and virtual environments

This study evaluated the performance of an adaptive control model learned in a virtual environment by applying it to real fault scenarios. The adjusted values from the unified SDM mapping file were loaded and applied, followed by calibration. The experiment analyzed how precisely the coordinate alignment between the virtual and real environments was achieved and assessed whether the model functioned appropriately in a real environment. To achieve this, a calibration process based on 2D markers was conducted, and a homography matrix was calculated to minimize the differences between the two environments. To align the coordinates of the virtual and real environments, four 2D markers with unique IDs were placed at identical positions in both environments. A homography matrix was then derived based on these markers. Figure 9 shows the positions of the 2D markers in both real and virtual environments, as well as the coordinates used to align the two coordinate systems.

Marker-based spatial calibration. Detailed view of spatial calibration process using 2D markers, aligning virtual and real coordinates for accurate control execution.

After the homography matrix aligned the coordinate systems, the Euclidean distance between the real-environment coordinates and the expected coordinates from the virtual environment was 9.87 pixels (equivalent to 2.47 cm). This error highlights the subtle differences that may arise when control models trained in a virtual environment are applied to a real system, suggesting the need for additional adjustments. Further calibration and correction algorithms could be introduced to reduce these errors. After completing the calibration, the RL-based adaptive control model trained in the virtual environment was applied to a real system to evaluate its performance. Although minor discrepancies remained between the two coordinate systems, the proposed system maintained high accuracy and successfully reached the target position. However, the accumulated effect of these small errors during real-time control could potentially impact performance, indicating the need for further refinement. These results indicate the potential of the proposed digital-twin-based adaptive control system to provide fast and accurate responses in real fault scenarios. With additional calibration efforts to reduce coordinate alignment errors, the system's performance can be further enhanced.
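
The reported figures imply a scale of roughly 0.25 cm per pixel. The sketch below shows the arithmetic; the function names are hypothetical, and the scale factor is inferred from the two reported values rather than stated in the paper.

```python
import math

# Inferred from the text: 9.87 px corresponds to 2.47 cm (about 0.25 cm per pixel).
CM_PER_PIXEL = 2.47 / 9.87


def alignment_error_px(expected, observed):
    """Euclidean distance in pixels between the expected and observed 2D positions."""
    return math.dist(expected, observed)


def px_to_cm(pixels, cm_per_pixel=CM_PER_PIXEL):
    """Convert a pixel distance to centimeters using the inferred scale."""
    return pixels * cm_per_pixel
```

The same scale is consistent with the case-study deviation reported later, where 5–8 pixels corresponds to about 1.25–2 cm.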

Validation through case study analysis

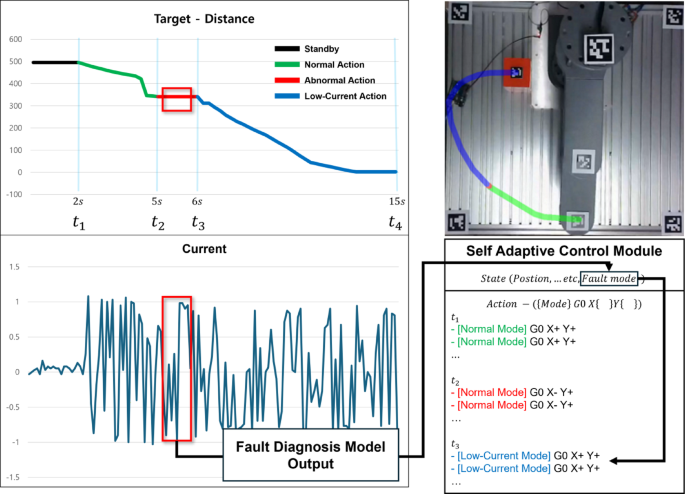

This section presents the results of the case study conducted to validate the artificial intelligence system developed in the previous sections. The objective of the case study scenario, as illustrated in Fig. 10 and Supplementary video 1, is to ensure that the system safely reaches the target point indicated by the red box while flexibly adapting to faults and completing the task without interruption. Supplementary video 1 records the moment of the real-time mode shift. The scenario was designed to demonstrate how the proposed fault diagnosis and adaptive control system responds to faults and maintains stable, continuous operation under fault conditions. A key feature of the proposed system is its ability to adjust the control commands in real time based on the fault diagnosis results, a capability provided by the RL-based control module. This adaptive process is supported by the precise synchronization between the virtual and real environments: the calibration process ensured that the control commands learned in the virtual environment were accurately applied to the physical system. This synchronization is particularly important during fault conditions, as it ensures that the real system mirrors the fault-tolerant strategies developed in the virtual environment.

Adaptive fault response scenario. Step-by-step illustration of fault response process, including fault detection, adaptive control adjustments, and successful task completion under fault conditions (Supplementary video 1).

The results of the case study are as follows:

t0-t1 (0–2s): In this phase, the system is in a standby state, ready to operate. The fault diagnosis model and RL-based control module are connected and prepared for operation.

t1-t2 (2–5s): The robot begins normal operation, following the green path toward the target point. At each moment, the fault diagnosis model evaluates the current state by receiving fault mode inputs. If the system functions normally, the control commands proceed without any adjustments.

t2-t3 (5–6s): The proposed system is designed to rapidly detect and respond to errors occurring along the red path. The CNN-Transformer-based fault diagnosis model identifies errors within one second and transmits the fault information in real time to the RL-based control module. Using this information, the control module promptly adjusts its control strategy and initiates fault-tolerant responses to ensure continuous system operation. The seamless interaction between fault detection, control adaptation, and virtual-to-real synchronization enhances the system’s stability, enabling uninterrupted operation even in the presence of faults. Unlike conventional predictive maintenance systems that halt operations upon fault detection, the proposed system actively detects faults and responds in real time, thereby ensuring a more reliable operation.

After t3 (6–15s): When an error occurs, the control module swiftly switches the system to a low-power mode and adjusts its trajectory along the blue path to safely reach the target point. The RL–based control module adapts in real time, transitioning to a low-power mode within one second of fault detection, thereby minimizing the impact of errors while maintaining task execution. This adaptive control approach allowed the system to sustain high task accuracy, achieving an average deviation of 5–8 pixels (1.25–2 cm) while successfully reaching the target. Consequently, the system remained stable even under faulty conditions, ensuring minimal deviation from the target and reliable task execution.
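
The timeline above can be summarized as a simple mode-switching sketch. This is only an illustration of the reported sequence of events: the mode names, the `next_mode` rule, and the one-second time step are hypothetical, and in the actual system the response is produced by the learned RL policy rather than by a fixed rule.

```python
from enum import Enum


class Mode(Enum):
    STANDBY = "standby"      # 0-2 s: modules connected, robot not yet moving
    NORMAL = "normal"        # 2-6 s: robot follows the planned path
    LOW_POWER = "low_power"  # after 6 s: fault-tolerant mode on the adjusted path


def next_mode(mode, started, fault_detected):
    """Return the mode for the next control step (hypothetical rule-based stand-in)."""
    if mode is Mode.LOW_POWER or fault_detected:
        return Mode.LOW_POWER  # this sketch stays in low-power mode until the task ends
    if started:
        return Mode.NORMAL
    return Mode.STANDBY


def simulate(duration_s=15, start_s=2, fault_detected_s=6):
    """Step through the case-study timeline in 1 s increments."""
    timeline = []
    mode = Mode.STANDBY
    for t in range(duration_s):
        mode = next_mode(mode, started=t >= start_s,
                         fault_detected=t >= fault_detected_s)
        timeline.append((t, mode))
    return timeline
```

In this sketch the fault occurs at 5 s and is reported by the diagnosis model at 6 s, matching the roughly one-second detection delay described in the text; the robot keeps moving throughout instead of halting.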

The case study highlights both the strengths of the system and the aspects that must be addressed to improve its performance. By integrating CNN-Transformer-based fault detection, the system improves the accuracy of fault identification. Additionally, RL enables real-time adaptive control, allowing the system to respond dynamically to changing conditions. Regarding the fault response speed, the system detected faults within 1–1.5 s of their occurrence. Although this delay is small, it may affect certain real-time control scenarios. To address this issue, future research should focus on optimizing the trade-off between model accuracy and window size: reducing the window size could accelerate fault detection, provided that model accuracy is maintained. Moreover, incorporating high-precision sensors capable of detecting faults with greater accuracy could enhance system performance. Another factor contributing to control delays is the limitation of the communication system. The system currently relies on serial communication, which introduces latency in the delivery of control commands after a fault is detected. A potential solution is to replace it with a high-speed industrial communication system such as Ethernet, which supports faster data transmission and would improve the response time of the system, leading to more reliable real-time adaptive control.
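
A rough latency estimate helps frame the trade-offs discussed above. The sketch below is a back-of-the-envelope calculation only: the 10 Hz sampling rate comes from the text, while the payload size and baud rate in the usage lines are hypothetical, since the paper does not report them. The serial estimate covers wire time under 8N1 framing (10 bits per byte) and ignores buffering and scheduling delays.

```python
def window_latency_s(window_size, sample_rate_hz=10):
    """Time needed to collect one full diagnosis window."""
    return window_size / sample_rate_hz


def serial_transfer_s(payload_bytes, baud_rate, bits_per_byte=10):
    """Wire time for a UART payload; 8N1 framing sends 10 bits per data byte."""
    return payload_bytes * bits_per_byte / baud_rate


# Halving the window from 10 to 5 samples halves the time needed to fill it.
for size in (10, 5):
    print(f"window={size:2d} samples -> {window_latency_s(size):.1f} s to fill")

# Hypothetical command message: 32 bytes over a 115200-baud serial link.
print(f"serial wire time: {serial_transfer_s(32, 115200) * 1000:.2f} ms")
```

Whether a smaller window is acceptable depends on how much diagnostic accuracy is lost, which would have to be measured on the actual model.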
