A new algorithm has been designed to enable robots to make safer decisions around humans.

In a factory setting, robots and humans can form strong teams. The robot handles repetitive tasks like assembly with speed and precision, while the human performs complex duties like quality control.

But humans are, by nature, prone to mistakes and unexpected behavior.

These unpredictable actions can throw even the most sophisticated robot off course, creating situations it isn’t programmed to handle.

The results, as we know, can be tragic.

An example of the risks is the 2023 incident in South Korea, where an industrial robot fatally crushed a worker at a vegetable packaging plant.

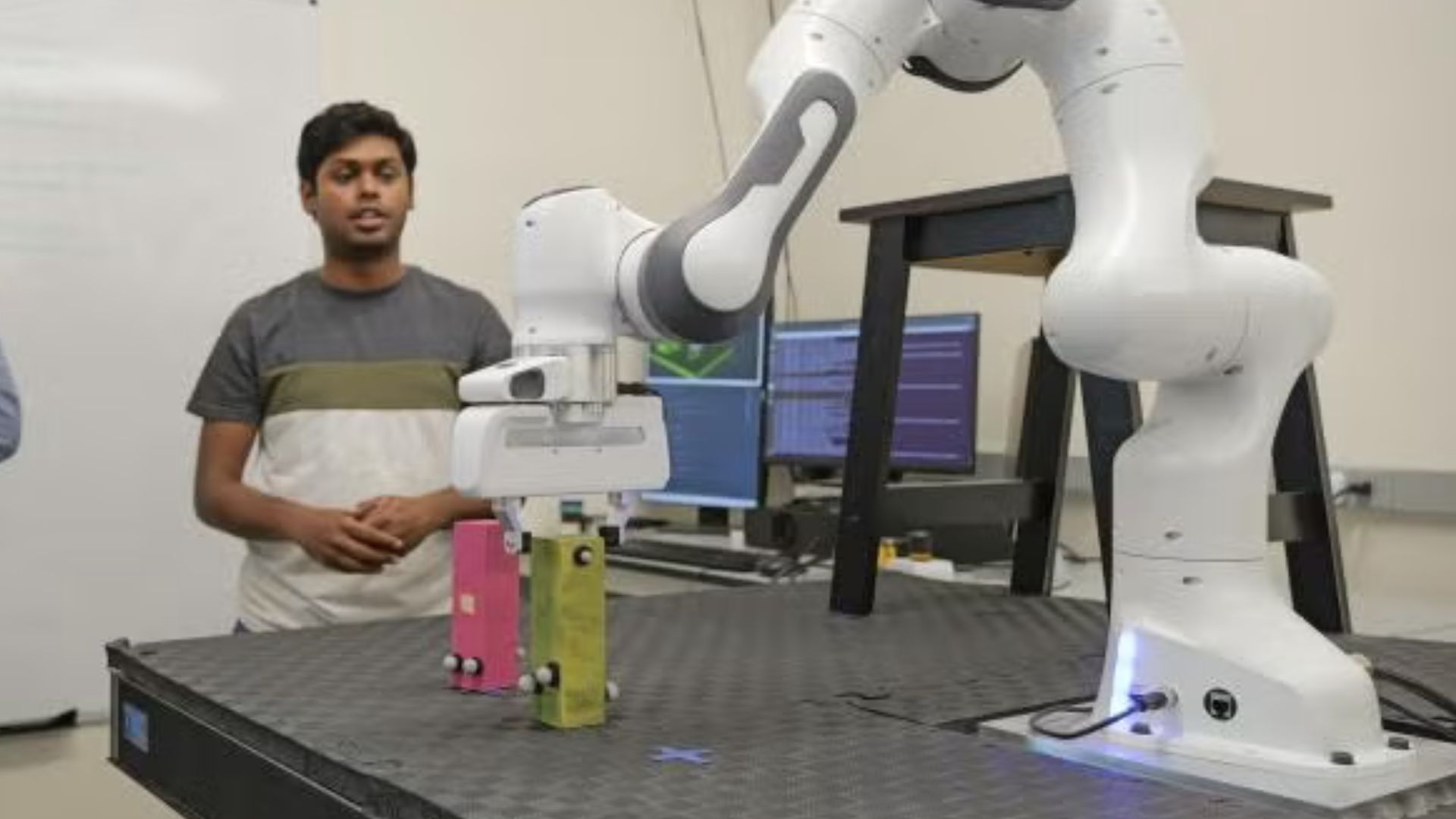

A team at the University of Colorado Boulder set out to bridge this gap. They have proposed a new algorithm to help robots make safer, smarter decisions around humans, even under great uncertainty.

Prioritizing safety

Similar to how humans make decisions, robots use mental models to predict human actions and react.

In a new study, Professor Lahijanian and his graduate students devised algorithms that draw inspiration from “game theory,” a branch of mathematics that analyzes strategic decision-making.

The researchers framed the problem as a game in which the robot is a player trying to “win.”

However, because a win isn’t guaranteed with a human involved, the goal shifted from winning to finding an “admissible strategy,” where the robot completes as much of its task as possible while minimizing any potential harm to the person.
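To make the idea concrete, here is a minimal sketch that assumes the textbook game-theory reading of “admissible”: a strategy is admissible if no other strategy does at least as well against every human behavior and strictly better against at least one. The study's formal definition may differ. The strategy names, human behaviors, and payoff numbers below are invented for illustration and do not come from the research.

```python
# Hypothetical payoff table (all values invented for illustration):
# PAYOFFS[robot_strategy][human_behavior] = task progress, with unsafe
# outcomes scored very low.
PAYOFFS = {
    "continue_in_place": {"careful": 10, "distracted": -100, "reaches_in": -100},
    "slow_and_correct":  {"careful": 8,  "distracted": 6,    "reaches_in": -100},
    "relocate_work":     {"careful": 6,  "distracted": 6,    "reaches_in": 5},
    "stop_entirely":     {"careful": 0,  "distracted": 0,    "reaches_in": 0},
}


def weakly_dominates(a, b, payoffs):
    """True if strategy a is never worse than b and sometimes strictly better."""
    behaviors = payoffs[a].keys()
    never_worse = all(payoffs[a][h] >= payoffs[b][h] for h in behaviors)
    sometimes_better = any(payoffs[a][h] > payoffs[b][h] for h in behaviors)
    return never_worse and sometimes_better


def admissible_strategies(payoffs):
    """Keep every strategy that no other strategy weakly dominates."""
    return [
        s for s in payoffs
        if not any(weakly_dominates(o, s, payoffs) for o in payoffs if o != s)
    ]


print(admissible_strategies(PAYOFFS))
# ['continue_in_place', 'slow_and_correct', 'relocate_work']
```

In this toy table, stopping entirely is ruled out because relocating the work is better in every case. Note that the risky option of continuing in place survives the filter, so admissibility on its own narrows the choices without settling which one to pick.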

“In choosing a strategy, you don’t want the robot to seem very adversarial,” said Lahijanian, an associate professor at CU.

“In order to give that softness to the robot, we look at the notion of regret. Is the robot going to regret its action in the future? And in optimizing for the best action at the moment, you try to take an action that you won’t regret,” he said.
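One common way to formalize regret is the minimax-regret rule from decision theory, sketched below. This is an illustrative stand-in and not necessarily the formulation used in the study. Regret is taken to be the gap between what the robot earned and the best it could have earned had it known the human's behavior in advance; the robot then picks the strategy whose worst-case regret is smallest. The payoff table is hypothetical.

```python
# Hypothetical payoff table (all values invented for illustration):
# PAYOFFS[robot_strategy][human_behavior] = task progress, with unsafe
# outcomes scored very low.
PAYOFFS = {
    "continue_in_place": {"careful": 10, "distracted": -100, "reaches_in": -100},
    "slow_and_correct":  {"careful": 8,  "distracted": 6,    "reaches_in": -100},
    "relocate_work":     {"careful": 6,  "distracted": 6,    "reaches_in": 5},
    "stop_entirely":     {"careful": 0,  "distracted": 0,    "reaches_in": 0},
}


def max_regret(strategy, payoffs):
    """Worst-case regret of a strategy across all human behaviors."""
    behaviors = payoffs[strategy].keys()
    # Best payoff achievable for each behavior, had the robot known it in advance.
    best = {h: max(row[h] for row in payoffs.values()) for h in behaviors}
    return max(best[h] - payoffs[strategy][h] for h in behaviors)


def min_regret_strategy(payoffs):
    """Pick the strategy with the smallest worst-case regret."""
    return min(payoffs, key=lambda s: max_regret(s, payoffs))


for name in PAYOFFS:
    print(name, max_regret(name, PAYOFFS))
print("chosen:", min_regret_strategy(PAYOFFS))
# chosen: relocate_work
```

With these made-up numbers, relocating the work has a worst-case regret of 4, compared with 10 for stopping and more than 100 for either option that keeps working in place, so the robot gives up a little progress to avoid any outcome it would badly regret.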

Collaborative workspace

The team envisions robots that use these algorithms to respond intelligently when a human collaborator does something unexpected.

Much like a chess player, the system is designed to stay a step ahead of a person, but its true priority is human safety, not perfect prediction.

If a robot’s human partner makes a mistake or acts unpredictably, the robot’s first move will be to correct the issue safely.

If that fails, the robot could take proactive steps—like moving its work to a safer location—to finish the task without endangering the person.

The ultimate goal is clear: robots must adjust to humans, not vice versa.

“You can have a human who is a novice and doesn’t know what they’re doing, or you can have a human who is an expert. But as a robot, you don’t know which kind of human you’re going to get. So you need to have a strategy for all possible cases,” Lahijanian added.

Robots already excel at monotonous, repetitive tasks, bringing speed and unwavering accuracy.

The author believes robots and AI can enhance human abilities when used correctly.

Robots could also fill labor shortages in fields like elder care and take on physically demanding jobs that harm human health.

This would free people to concentrate on uniquely human strengths such as intelligence, judgment, and creativity.

The findings were presented at the International Joint Conference on Artificial Intelligence in August 2025.
